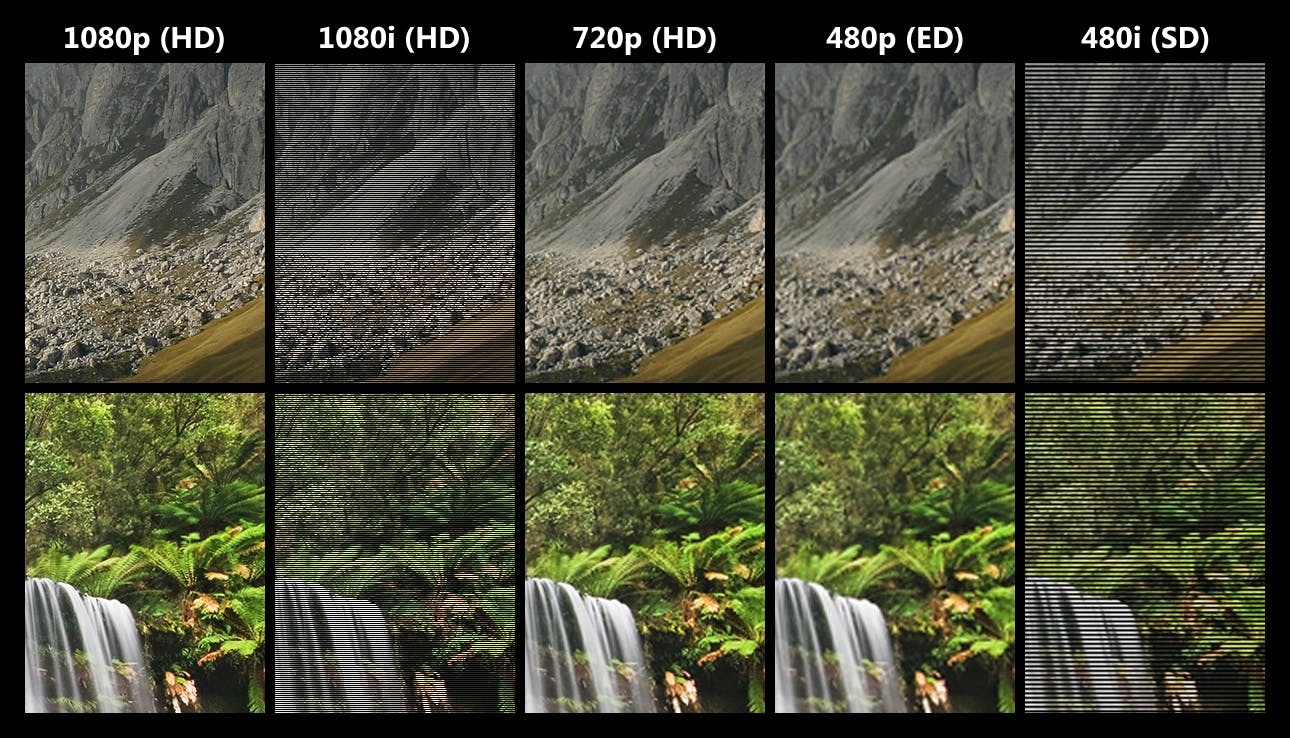

Interlaced footage is instantly recognizable by the horizontal scan lines crisscrossing jaggedly across its surface, causing distortions to the scene being filmed. If you’ve spent any amount of time watching videos — especially older footage — you’ve probably come across it multiple times.

German filmmaker Volker Wischnowski recently used Pixop’s AI Deinterlacer as an easy, affordable and quick way to deinterlace footage from some parts of his film Hurtigruten.

“Hurtigruten was filmed in several installments and I changed my filming technique frequently. In the earliest iteration, I was shooting in 50i and needed a way to convert that footage into progressive because I couldn’t reshoot it given the nature of the subject matter. It was integral to the film, so I had to figure out a way to fix it,” he explained when we sat down with him to talk about his experience with Pixop. “Your deinterlacer did a very good job of deinterlacing it, with very little effort needed from my end.”

Before we dive into Volker’s story, here’s a quick rundown of what interlaced footage is and what it means to deinterlace it.

Interlaced footage

Interlacing is a technique that was invented and popularized almost 70 years ago, long before the advent of digital streaming. It’s most commonly associated with television video formats like NTSC and PAL.

Essentially, interlacing was an early form of video compression that was used as a way to save on bandwidth while making the footage being broadcast appear as smooth as possible. In other words, it is a “technique for doubling the perceived frame rate of a video display without consuming extra bandwidth.”

This was achieved by splitting each frame of the video into two sets of lines (odd and even), known as fields, which were captured one after the other at slightly different moments in time. During broadcast, one field would be sent to viewers 1/60th (for NTSC) or 1/50th (for PAL) of a second before the other. This process generated smooth motion, at least to the naked eye (you can thank the persistence of vision phenomenon for this), while being less data-intensive than other broadcast methods at the time. A win-win, in other words.
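To make the idea of fields concrete, here is a minimal Python sketch that splits a frame into its two sets of lines. It assumes a frame is simply a list of rows, and the function name `split_fields` and the "top/bottom" naming are illustrative choices rather than anything from a particular tool.

```python
def split_fields(frame):
    """Split a frame (a list of rows) into its two interlaced fields.

    Assumption for this sketch: rows 0, 2, 4, ... form the "top" field
    and rows 1, 3, 5, ... form the "bottom" field.
    """
    top_field = frame[0::2]
    bottom_field = frame[1::2]
    return top_field, bottom_field


# A tiny 6-line "frame" in which every pixel stores its own row number.
frame = [[row] * 4 for row in range(6)]
top, bottom = split_fields(frame)
print([r[0] for r in top])     # rows taken for the top field
print([r[0] for r in bottom])  # rows taken for the bottom field
```

Each field holds only half the lines of the full frame, which is where the bandwidth saving comes from.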

However, interlaced footage is a problem when you try to deliver that feed to a progressive display, because interlaced footage requires a display that is natively capable of showing the two fields one after the other. Progressive monitors are not designed to do this, which is what causes the conspicuous scan lines. Therefore, to properly display interlaced footage on progressive screens, deinterlacing must first be applied.

Deinterlacing

While older screen technology like ALiS plasma panels and old CRTs were designed to display interlaced footage, newer screens are based on LCD, OLED, QLED, or AMOLED technology which mostly use progressive scanning. Therefore, interlaced footage must go through a process known as deinterlacing before it can successfully be screened on progressive screens.

Deinterlacing is the process of converting interlaced video into a non-interlaced or progressive format, which requires recombining the two fields (odd and even) of interlaced video in some way. There are a few methods of achieving this, including field combination deinterlacing, field extension deinterlacing and motion compensation deinterlacing. Here’s a brief explainer on each:

Field combination deinterlacing: In this method of deinterlacing, the even and odd fields are combined into one frame. This retains the vertical resolution, but it halves the perceived frame rate, which may cause the newly deinterlaced footage to lose the smooth, fluid motion of the original. Several techniques are used, including weaving, blending and selective blending. Weaving interleaves the lines of two consecutive fields into a single frame; it can cause an artifact called ‘combing’ wherever the scene moved between the two fields, because the pixels in one field no longer line up with the pixels in the other. Blending averages consecutive fields so that they are displayed as one frame, which can lead to an artifact known as ‘ghosting’. Selective blending is a combination of the two previous techniques.
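Weaving and blending can be sketched in a few lines of Python. This is a simplified illustration, not a production implementation: fields are plain lists of rows, the function names are made up for the example, and `blend` restores full height by repeating each averaged line, which is only one of several possible choices.

```python
def weave(top_field, bottom_field):
    """Interleave the lines of two fields into one full-height frame."""
    frame = []
    for top_row, bottom_row in zip(top_field, bottom_field):
        frame.append(list(top_row))
        frame.append(list(bottom_row))
    return frame


def blend(top_field, bottom_field):
    """Average the two fields line by line, then repeat each averaged
    line so the result has the same height as a woven frame."""
    frame = []
    for top_row, bottom_row in zip(top_field, bottom_field):
        averaged = [(a + b) / 2 for a, b in zip(top_row, bottom_row)]
        frame.append(averaged)
        frame.append(list(averaged))
    return frame


# A bright pixel (9) that moved one column to the right between fields.
top = [[9, 0, 0], [9, 0, 0]]
bottom = [[0, 9, 0], [0, 9, 0]]

for row in weave(top, bottom):
    print(row)  # alternating lines disagree: the 'combing' pattern
for row in blend(top, bottom):
    print(row)  # the pixel is smeared over two columns: 'ghosting'
```

In the woven frame the bright pixel jumps back and forth from line to line, while in the blended frame it appears at half intensity in both positions.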

Field extension deinterlacing: In this method, each field (with only half the lines) is taken and extended to fit the entire screen and make a frame. This halves the vertical resolution of the image, but it does maintain the original frame rate. Some of the techniques used are half-sizing and line doubling. In half-sizing, each field is displayed on its own, unscaled, resulting in a video with half the vertical resolution of the original. Line doubling takes the lines of each interlaced field (odd or even) and doubles them to fill in the missing lines. This prevents combing artifacts and maintains smooth motion, but can cause a noticeable reduction in picture quality.
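As a rough illustration of line doubling, the following Python sketch turns every field of a frame into its own full-height frame. The representation (lists of rows) and the function names are assumptions made for the example.

```python
def line_double(field):
    """Build a full-height frame from one field by repeating every line."""
    frame = []
    for row in field:
        frame.append(list(row))
        frame.append(list(row))
    return frame


def field_extension(interlaced_frame):
    """Return two progressive frames, one per field, from one interlaced frame."""
    top_field = interlaced_frame[0::2]
    bottom_field = interlaced_frame[1::2]
    return [line_double(top_field), line_double(bottom_field)]


interlaced = [[1, 1], [2, 2], [3, 3], [4, 4]]
for progressive in field_extension(interlaced):
    print(progressive)
```

Every output frame contains picture information from a single field only, which is why there is no combing but also only half the vertical detail.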

Motion compensation deinterlacing: This method provides the best result, but also requires the most processing power. It works by combining traditional field combination methods and field extension methods to create a high-quality deinterlaced, progressive video. The best algorithms try to predict the direction and amount of image motion between subsequent fields in order to better blend them together and produce the most natural, high-quality result possible.
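The core idea, estimating motion and undoing it before combining fields, can be shown with a deliberately tiny Python sketch. It makes strong simplifying assumptions that real deinterlacers do not: motion is a single horizontal shift for the whole picture, it is found by brute-force search, and the one-line vertical offset between fields is ignored. All function names are hypothetical.

```python
def shift_row(row, dx):
    """Shift a row right by dx pixels (left if dx is negative),
    repeating the edge pixel to fill the gap."""
    last = len(row) - 1
    return [row[min(max(x - dx, 0), last)] for x in range(len(row))]


def sad(field_a, field_b):
    """Sum of absolute differences between two equally sized fields."""
    return sum(
        abs(a - b)
        for row_a, row_b in zip(field_a, field_b)
        for a, b in zip(row_a, row_b)
    )


def estimate_shift(reference, moved, max_shift=3):
    """Find the shift that, applied to `moved`, best matches `reference`.
    Ties are broken in favour of the smallest shift."""
    def cost(dx):
        shifted = [shift_row(row, dx) for row in moved]
        return (sad(reference, shifted), abs(dx))
    return min(range(-max_shift, max_shift + 1), key=cost)


def motion_compensated_weave(top_field, bottom_field, max_shift=3):
    """Align the bottom field to the top field, then weave them."""
    dx = estimate_shift(top_field, bottom_field, max_shift)
    aligned = [shift_row(row, dx) for row in bottom_field]
    frame = []
    for top_row, bottom_row in zip(top_field, aligned):
        frame.append(list(top_row))
        frame.append(bottom_row)
    return frame, dx


# The bright pixel moved two columns to the right between the fields.
top = [[0, 0, 9, 0, 0, 0], [0, 0, 9, 0, 0, 0]]
bottom = [[0, 0, 0, 0, 9, 0], [0, 0, 0, 0, 9, 0]]
frame, dx = motion_compensated_weave(top, bottom)
print(dx)
for row in frame:
    print(row)
```

Because the second field is shifted back into place before weaving, the lines agree again and the combing seen with a plain weave disappears. Practical algorithms estimate motion per block or per pixel rather than for the whole picture.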

Pixop’s AI Deinterlacer

A side-by-side comparison of ground truth, interlaced and deinterlaced footage using Pixop's AI deinterlace filter.

Pixop’s AI Deinterlacer uses motion compensation deinterlacing. Based on AI and deep learning, our deinterlacer has strong anti-aliasing capabilities and transforms video into progressive form while maintaining definition and details.

Features

- Our deinterlacer harnesses the learning power of deep neural network architecture to enhance the quality of video.

- It deinterlaces source video at any resolution up to UHD 4K.

- Enjoy an output image that benefits from significantly less aliasing along edges than classic algorithms produce.

- It is carefully designed to reduce the undesirable temporal shimmering artifacts called "twittering".

- It is tested to produce good results on a wide range of different genres such as entertainment, sports, action and documentaries.

- It can reduce spatial aliasing, moiré patterns and interline twitter (shimmering).

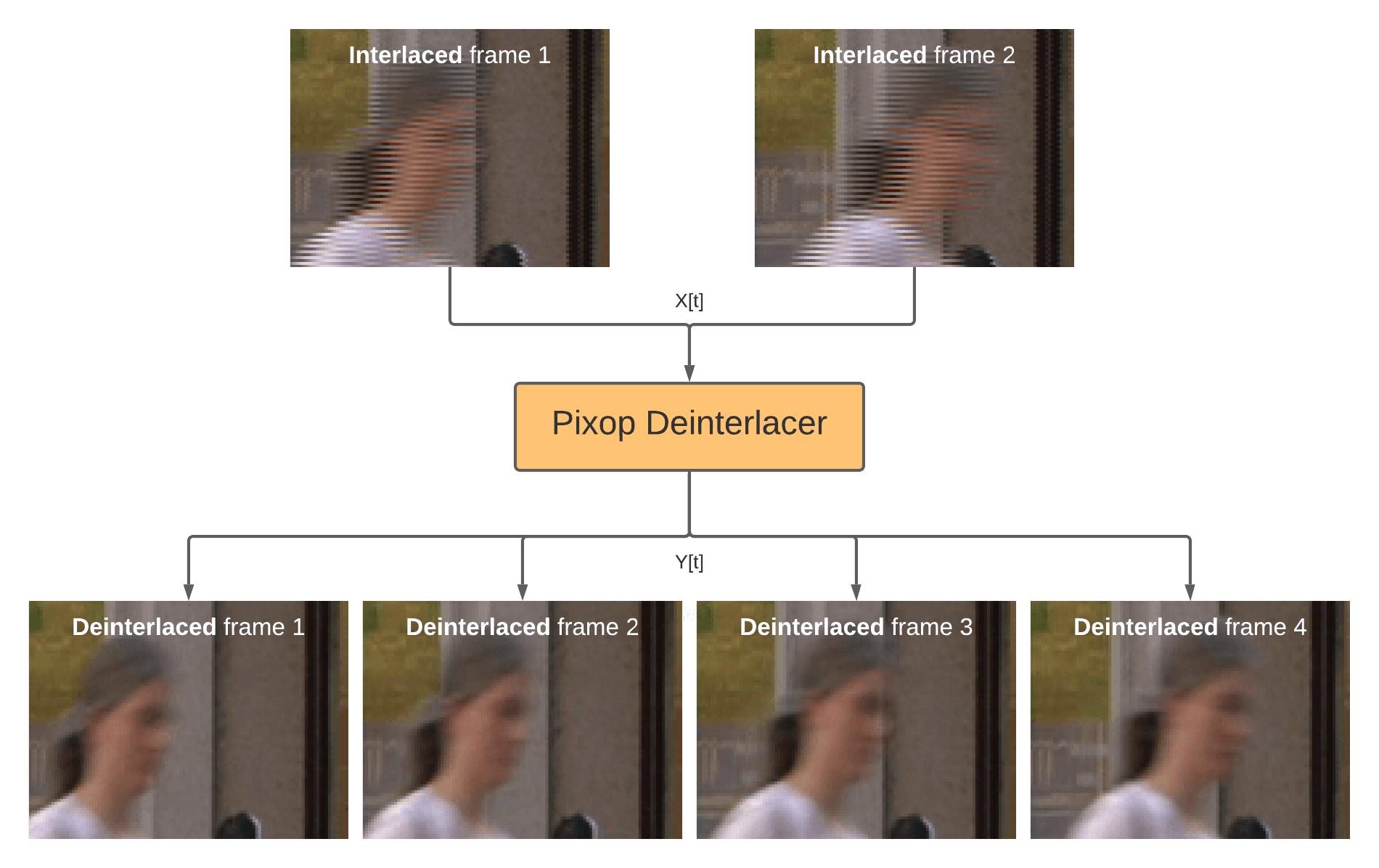

How it works

The deep convolutional neural network architecture it is based on uses a combination of spatial and temporal filtering to learn how to spatially deinterlace frames and how to optimally combine the effects of motion and temporal imperfections to generate the deinterlaced output.

Video is processed in blocks of two interlaced video frames as input. An enhanced block of four deinterlaced frames is produced via inference using our pre-trained neural network model as shown in the diagram below. This type of multi-frame approach is common among deinterlacing algorithms as it allows better filtering to be achieved for regions in a frame with little or no motion.

How Pixop's AI deinterlace filter works.
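The input and output shape of this block-based processing can be sketched in Python. Note that this only illustrates the bookkeeping: two interlaced frames contain four fields, so a block yields four progressive frames. The neural network itself is replaced by a trivial line-doubling placeholder, and every name here, along with the handling of a leftover frame at the end, is an assumption made for the example rather than Pixop's implementation.

```python
def line_double(field):
    """Placeholder upscaler: repeat each line of a field."""
    frame = []
    for row in field:
        frame.append(list(row))
        frame.append(list(row))
    return frame


def placeholder_model(block):
    """Stand-in for the pre-trained network (NOT the real model).
    Produces one progressive frame per field in the block."""
    output = []
    for interlaced_frame in block:
        output.append(line_double(interlaced_frame[0::2]))
        output.append(line_double(interlaced_frame[1::2]))
    return output


def deinterlace_in_blocks(frames, block_size=2):
    """Process a video in blocks of `block_size` interlaced frames."""
    output = []
    for start in range(0, len(frames), block_size):
        block = frames[start:start + block_size]
        output.extend(placeholder_model(block))
    return output


# Four interlaced frames of 4 lines x 2 pixels each.
video = [[[n, n]] * 4 for n in range(4)]
result = deinterlace_in_blocks(video)
print(len(video), "interlaced frames in,", len(result), "progressive frames out")
```

With this layout, each block of two input frames contributes four output frames, so the output has twice as many frames as the input.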

Deinterlacing with Pixop

We recently sat down with Volker Wischnowski to ask about his experience using Pixop’s AI deinterlacer. What follows is the edited and condensed transcript of that conversation.

Tell us a little bit about yourself and how you got your start in filmmaking.

I’ve always had a love of cinema, something I got from my father. He was a big film buff and made home movies using an old analog Super 8 camera. He definitely inspired that same love of movies in me. As I got older, I went into the finance field, working in a bank for many years. But my penchant for filmmaking never quite left me. I had a mentor who worked at Northern German Broadcasting (a TV and radio station) who got me into editing. In 2007, with his help, I began directing my first movie, ‘Way of St. James’ in Spain, which sold about 75,000 copies — one of the two best-selling films from that publisher. I also showed the movie in theatres with live narration. I loved the experience and wanted to make my second film, Hurtigruten.

Hurtigruten (Credit: Volker Wischnowski)

Tell us about Hurtigruten and the process of making the movie.

Hurtigruten is a coastal ferry service along the coast of Norway. I wanted to make a movie about the journey, which doesn’t take nearly as long as some of the other trips (like some around Canada) I had considered making movies on, so I decided to take the plunge. I was still working in the bank at this time, so I waited until 2013 when good weather and a wonderful summer in Norway made it possible for me to shoot.

It took four months to complete the first iteration of the film and I began to screen it in 2014. I made back the money I had invested almost immediately, within nine days or so. I wanted to make the film better, so I reinvested the money and went back to Norway in the summer of 2014 to work on improving the film. I added about seven minutes more, which cost almost double the amount I had spent on the first 60 minutes! But the film became even more successful as a result. It was screened in many cinemas with an audience of hundreds of people. Since then, I’ve been back to Norway many times to continue improving the film with scenes from the country as well, not just the ship. I’m also a huge fan of the Marvel movies and the cutscenes they insert after the credits roll, so I’ve also included that in the latest version. The entire experience has been a blast and very rewarding to work on.

Were there any issues along the way that meant you needed a solution like Pixop during post?

Yes, it’s primarily because of how many iterations the film has gone through and how many different parts it has been shot in. My technique has evolved a lot during that time. As I started to show the film on bigger and bigger screens, something was really bothering me: when I started shooting in 2013, I was shooting in 50i. I switched to 4K 25p in 2014. So, on bigger screens, it’s very obvious a lot of the older footage is interlaced. I needed to find a fix and tried a few different solutions, without much luck. Then I stumbled across Pixop while looking up ‘deinterlacing’ on Google and decided to give it a shot. And wow, it worked extremely well! I’m very happy with the result. I recently screened the film in Hamburg on a 12m screen and could really see a difference. A few bits were still a little choppy, but the results were far superior to anything else I tried.

Before versus After footage deinterlaced with Pixop's AI Deinterlacer

How did you find the process of using our deinterlacer?

It was great! I particularly liked how Pixop is cloud-based and very intuitive to use. It made the work easy and quick, which I appreciated. I had to process the footage in two steps to get the best result possible: first, by using your deinterlace filter and then applying motion blending, which you also offer. It worked out really well and I’ll definitely be using it in the future if I ever need to.